Arcl core location xcode tutorial

1/5/2024

With useTrueNorth set to true (the default), the scene continually adjusts as the device gets a better sense of north. Within the demo app, there’s a disabled property called adjustNorthByTappingSidesOfScreen which, once enabled, lets you tap the left and right sides of the screen to adjust the scene heading through the moveSceneHeading functions. My recommendation would be to find a nearby landmark which is directly True North from your location, place an object there using a coordinate, and then use the moveSceneHeading functions to adjust the scene until it lines up.

Improved Location Accuracy

CoreLocation can deliver location updates anywhere from every 1 to 15 seconds, with accuracies which vary from 150m down to 4m. Occasionally, you’ll receive a far more accurate reading, like 4m or 8m, before returning to more inaccurate readings.
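Since the occasional accurate reading is so much better than the ones around it, one simple way to take advantage of it is to hold on to the best recent reading instead of always trusting the latest one. The sketch below is only an illustration of that idea and is not the library’s own algorithm. Reading and BestReadingTracker are hypothetical names, Reading is a plain stand-in for CLLocation so the code runs anywhere, and the sketch assumes the device has not moved far within the chosen time window.

```swift
import Foundation

/// Hypothetical stand-in for CLLocation so the sketch runs without CoreLocation.
struct Reading {
    let latitude: Double
    let longitude: Double
    let horizontalAccuracy: Double  // uncertainty radius in metres, smaller is better
    let timestamp: Date
}

/// Hypothetical helper that keeps the most accurate reading seen recently.
struct BestReadingTracker {
    let maxAge: TimeInterval          // how long a good reading stays trusted
    private(set) var best: Reading?

    init(maxAge: TimeInterval) {
        self.maxAge = maxAge
    }

    /// Feeds in a new reading and returns the one that should be used.
    mutating func update(with reading: Reading) -> Reading {
        // Keep the stored reading only if it is still fresh AND more accurate
        // than the new one. Otherwise the new reading takes over.
        if let current = best,
           reading.timestamp.timeIntervalSince(current.timestamp) <= maxAge,
           current.horizontalAccuracy < reading.horizontalAccuracy {
            return current
        }
        best = reading
        return reading
    }
}
```

In a real app the stored reading goes stale as the user walks, so something would need to account for movement since it was taken. The time window here is just the crudest way to limit that error.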

If you need to use delegate features, you should subclass SceneLocationView.

One issue which I haven’t personally been able to overcome is that the iPhone’s True North calibration currently has an accuracy of 15° at best. This is fine for maps navigation, but when placing things on top of the AR world, it starts to become a problem. I’m confident that this issue can be overcome by using various AR techniques; it’s one area I think can really benefit from a shared effort.

To improve this currently, I’ve added some functions to the library that allow adjusting the north point: sceneLocationView.moveSceneHeadingClockwise and sceneLocationView.moveSceneHeadingAntiClockwise. You should use these by setting useTrueNorth to false, and then pointing the device in the general direction of north before beginning, so it’s reasonably close.
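As a rough sketch of how the two heading functions could be driven by taps on the sides of the screen, the code below maps a tap’s horizontal position to one of them. SceneHeadingAdjusting, HeadingNudge, nudge and applyTap are hypothetical names made up for this example. Which side turns which way is also an assumption, as the text only says that the left and right sides adjust the heading.

```swift
import Foundation

/// Hypothetical protocol mirroring the two heading functions named above.
/// In an app, SceneLocationView could be made to conform with an empty
/// extension, assuming its methods have exactly these signatures.
protocol SceneHeadingAdjusting {
    func moveSceneHeadingClockwise()
    func moveSceneHeadingAntiClockwise()
}

enum HeadingNudge {
    case clockwise
    case antiClockwise
}

/// Assumed mapping: left half of the view nudges anticlockwise,
/// right half nudges clockwise.
func nudge(forTapAtX x: Double, viewWidth: Double) -> HeadingNudge {
    return x < viewWidth / 2 ? .antiClockwise : .clockwise
}

/// Applies one tap to the scene. Remember to set useTrueNorth to false
/// first, otherwise the scene keeps re-adjusting itself.
func applyTap(atX x: Double, viewWidth: Double, to scene: SceneHeadingAdjusting) {
    switch nudge(forTapAtX: x, viewWidth: viewWidth) {
    case .clockwise:
        scene.moveSceneHeadingClockwise()
    case .antiClockwise:
        scene.moveSceneHeadingAntiClockwise()
    }
}
```

Keeping the left/right decision in a plain function means it can be checked without a device, while the actual scene rotation stays inside the library.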

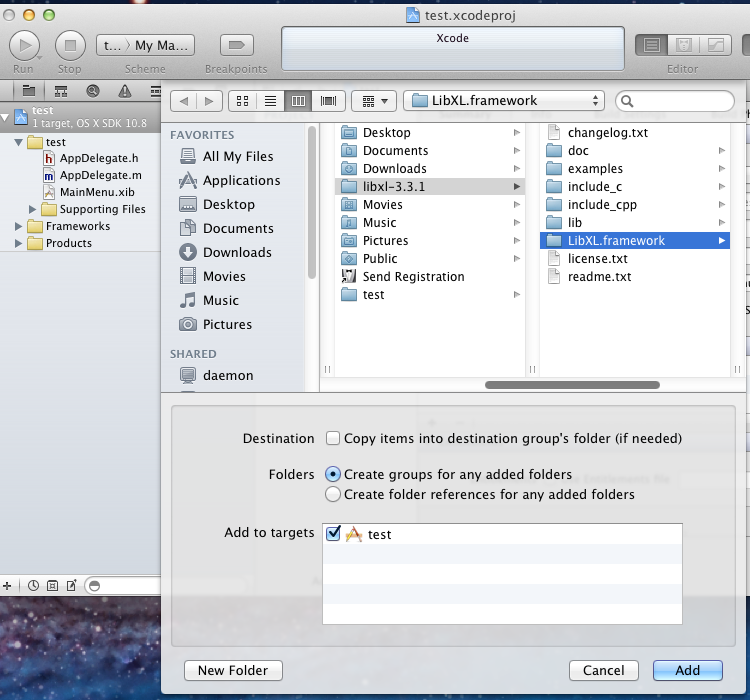

This library contains the ARKit + CoreLocation framework, as well as a demo application similar to Demo 1. Be sure to read the section on True North calibration. iOS 11 can be downloaded from Apple’s Developer website.

The library and demo come with a bunch of additional features for configuration. It’s all fully documented, so be sure to have a look around.

SceneLocationView is a subclass of ARSCNView. Note that while this gives you full access to ARSCNView to use it in other ways, you should not set the delegate to another class.

There are two ways to add a location node to a scene: using addLocationNodeWithConfirmedLocation, or using addLocationNodeForCurrentPosition, which positions the node at the device’s current position within the world and then gives it a coordinate. The first is called as addLocationNodeWithConfirmedLocation(locationNode: annotationNode). If you set the frame of your sceneLocationView, you should now see the pin hovering above Canary Wharf.

In order to get a notification when a node is touched in the sceneLocationView, you need to conform to LNTouchDelegate in the ViewController class, declared as class ViewController: UIViewController, LNTouchDelegate.

The annotationNodeTouched(node: AnnotationNode) method gives you access to the node that was touched on the screen. AnnotationNode is a subclass of SCNNode with two extra properties: image: UIImage? and view: UIView?. One of these properties will be filled in, depending on how the LocationAnnotationNode was initialized (using the constructor that takes a UIImage or the one that takes a UIView).

The locationNodeTouched(node: LocationNode) method instead gives you access to the nodes created from a PolyNode (e.g. the rendered directions of an MKRoute).
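Putting the node and touch pieces together, a view controller might look roughly like the sketch below. It is not copied from the library’s documentation. The coordinate, altitude and the "pin" image name are illustrative values, and the locationNodeTouchDelegate property and the LocationAnnotationNode(location:image:) initializer are used as I understand the library, so check them against the version you have installed. It needs to run on an ARKit-capable device.

```swift
import UIKit
import CoreLocation
import ARCL

class ViewController: UIViewController, LNTouchDelegate {
    let sceneLocationView = SceneLocationView()

    override func viewDidLoad() {
        super.viewDidLoad()

        // Assumed property name for receiving touch callbacks.
        sceneLocationView.locationNodeTouchDelegate = self
        sceneLocationView.run()
        view.addSubview(sceneLocationView)

        // Illustrative coordinate and altitude near Canary Wharf.
        let coordinate = CLLocationCoordinate2D(latitude: 51.504571, longitude: -0.019717)
        let location = CLLocation(coordinate: coordinate,
                                  altitude: 300,
                                  horizontalAccuracy: 0,
                                  verticalAccuracy: 0,
                                  timestamp: Date())

        // "pin" is a hypothetical asset name; skip the node if it is missing.
        guard let image = UIImage(named: "pin") else { return }
        let annotationNode = LocationAnnotationNode(location: location, image: image)
        sceneLocationView.addLocationNodeWithConfirmedLocation(locationNode: annotationNode)
    }

    override func viewDidLayoutSubviews() {
        super.viewDidLayoutSubviews()
        // Without a frame the scene view has zero size and nothing is visible.
        sceneLocationView.frame = view.bounds
    }

    // Called for annotation nodes; exactly one of image/view should be set.
    func annotationNodeTouched(node: AnnotationNode) {
        if let image = node.image {
            print("Touched an image annotation of size \(image.size)")
        } else if let view = node.view {
            print("Touched a view annotation: \(view)")
        }
    }

    // Called for nodes created from a PolyNode, such as rendered route directions.
    func locationNodeTouched(node: LocationNode) {
        print("Touched a location node: \(node)")
    }
}
```

Note that the controller sets only the touch delegate, never the ARSCNView delegate, in line with the warning above.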
ARKit requires iOS 11 and runs only on supported devices.

The improved location accuracy is currently in an “experimental” phase, but it could be the most important component. Because there’s still work to be done there, and in other areas, this project will be better served by an open community than by GitHub Issues alone. So I’m opening up a Slack group that anyone can join, to discuss the library, improvements to it, and their own work.
